What Is AI As a Service?

Written by Marc Alringer

What is AI as a Service (AIaaS)?

The delivery of artificial intelligence capabilities as cloud-based services, so organizations can use AI tools without extensive infrastructure or expertise.

What are the key components of AIaaS?

Cloud-based infrastructure, machine learning algorithms, data storage and processing capabilities, APIs for integration, and tools for model training and deployment.

What are the advantages and challenges of AIaaS?

Advantages: Cost efficiency, scalability, accessibility without extensive infrastructure, and the ability to use data analysis and machine learning technologies.

Challenges: Data privacy and security, potential biases in AI algorithms, the need for skilled personnel, and regulatory uncertainties.

Time to read: 6 min


What is AI as a Service (AIaaS)?

Artificial intelligence (AI) is making waves in every sector, from powering chatbots that handle customer inquiries to algorithms that suggest your next favorite film. AI as a Service (AIaaS) is the powerhouse behind this growth, providing advanced AI capabilities to companies without requiring significant tech infrastructure or specialized AI knowledge. Leveraging advancements in data science and AI, AIaaS simplifies complex data architecture challenges, enabling businesses to focus on innovation rather than infrastructure.

Think of AIaaS as a highly intelligent robot that becomes part of your team directly from the cloud, ready to get to work. It’s crafted to make tasks more efficient, inspire innovative thinking, and propel businesses into the future. This model is especially advantageous for companies looking to explore AI's potential without fully committing to an in-house team of data science experts.

Big names like OpenAI and Meta are leading the charge, releasing AI tools that can do everything from drafting emails to creating virtual worlds. OpenAI’s Sora, for instance, generates stunning and realistic videos from simple text prompts, while Meta's AI research is paving the way for virtual assistants that could one day manage your digital life. This wave of AIaaS is opening doors for businesses to reimagine what's possible, whether it's automating routine tasks or dreaming up products that we haven't even thought of yet.

AIaaS in the Current Technological Landscape

Recently, AIaaS has been a game-changer for businesses aiming to create their own custom chatbots. With user-friendly platforms like OpenAI's Playground and Google's AI Studio, anyone can now design a personalized chatbot akin to a DIY project — all without needing a background in tech. This democratization of technology empowers businesses to provide personalized customer interactions at scale and without a hefty price tag.

You’re likely familiar with AI innovations such as GPT-4 by OpenAI, renowned for its human-like conversation and writing capabilities, or Midjourney, which impressively turns text descriptions into detailed digital art and designs.

These innovations are just the beginning of the vast array of AIaaS tools transforming the business landscape. More than just sophisticated tech, these services are becoming integral team players, revolutionizing industries across the board.


How AIaaS Works

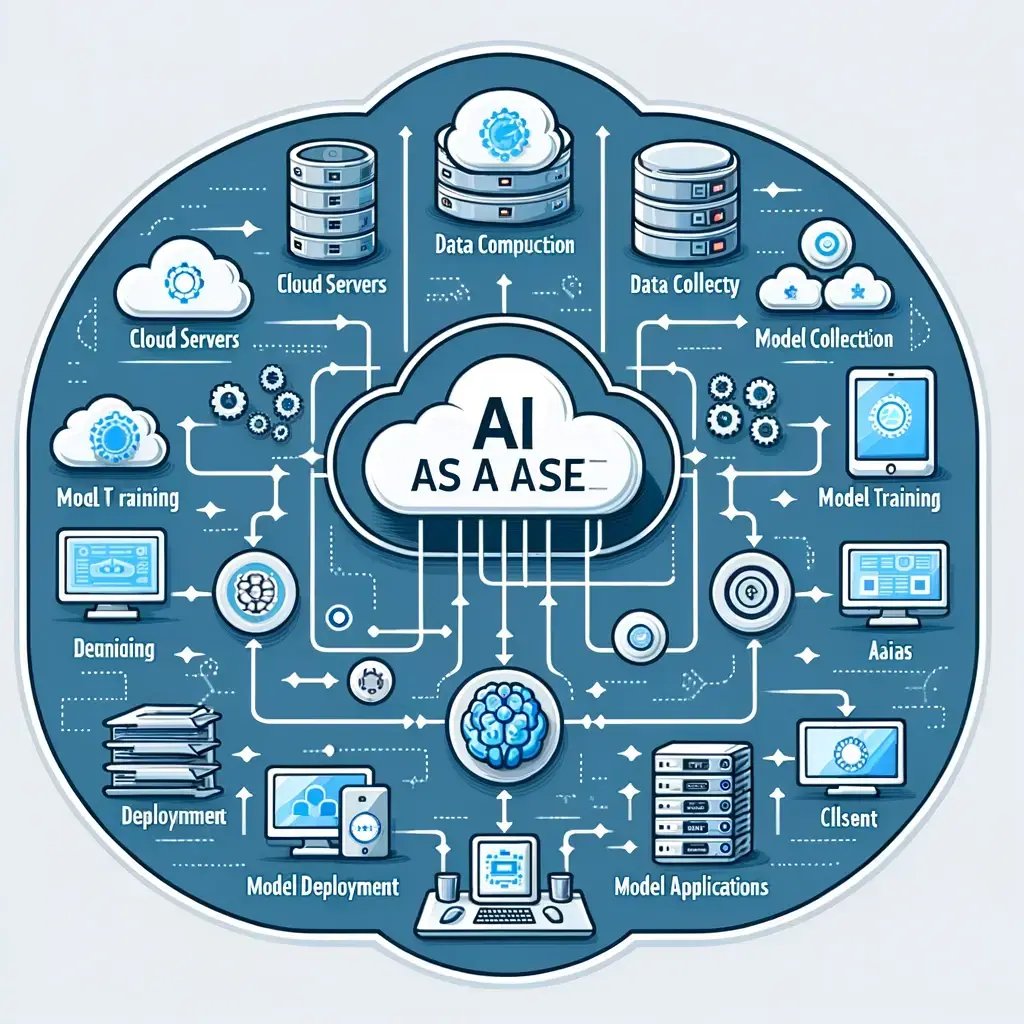

At its core, AI as a Service operates on the principle of delivering artificial intelligence capabilities through cloud computing infrastructure. Cloud platforms serve as the foundation, offering the computational power, storage, and scalability required for deploying AI models.

Machine learning algorithms, a cornerstone of AIaaS, play a central role in the service's functionality. These algorithms process vast datasets, learn patterns, and make predictions or recommendations based on the information provided.

The flexibility of AIaaS enables businesses to leverage an array of machine learning tools and techniques, from natural language processing (NLP) for understanding textual data to computer vision for interpreting visual information.

Data processing and storage are key components of the AIaaS workflow. High-quality, well-organized data is essential to AI models, and AIaaS providers excel in managing data processing and storage, ensuring that the AI algorithms get the necessary inputs to provide meaningful outputs.

In essence, AIaaS makes artificial intelligence more accessible to organizations of all sizes and industries. This way, more companies can harness its transformative potential.

Through the synergy of cloud computing services, machine learning models, and efficient data management, AIaaS empowers businesses to unlock new possibilities, driving innovation and starting a new era of intelligent solutions.
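To make this workflow concrete, here is a minimal sketch of how an application might package text for a cloud-hosted model over HTTP. The endpoint URL, the `text` field, and the API key are hypothetical placeholders rather than any specific provider's API; real services publish their own request formats.

```python
import json
import urllib.request

# Hypothetical endpoint: real AIaaS providers publish their own URLs and schemas.
API_URL = "https://api.example.com/v1/sentiment"


def build_request(text: str, api_key: str) -> urllib.request.Request:
    """Package text as a JSON POST request for a (hypothetical) hosted model."""
    payload = json.dumps({"text": text}).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=payload,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def analyze(text: str, api_key: str) -> dict:
    """Send the request and decode the JSON reply (needs a real endpoint)."""
    with urllib.request.urlopen(build_request(text, api_key), timeout=10) as resp:
        return json.loads(resp.read().decode("utf-8"))


# Building the request does not touch the network, so this line runs anywhere.
request = build_request("The new dashboard is great.", api_key="YOUR_API_KEY")
print(request.get_method(), request.full_url)
```

The point of the sketch is the division of labor: the application only prepares a small request, while the model, the hardware, and the scaling all live on the provider's side.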

Key Components of AIaaS

Cloud Computing Infrastructure

Role of Cloud Platforms in Hosting AI Services

In AI as a Service (AIaaS), cloud platforms provide the infrastructure needed to host machine learning services and deliver intelligent solutions. Platforms such as Amazon Web Services (AWS), Microsoft Azure, and Google Cloud give businesses access to the computational power, storage, and scalability required to deploy AI models.

Cloud platforms serve as the backbone of AIaaS by offering a flexible and dynamic environment for hosting machine learning algorithms. They provide the resources needed to process extensive datasets, train complex deep learning models, and run AI-driven applications. This approach removes the need for organizations to invest heavily in in-house hardware.

Benefits of Cloud Infrastructure for AIaaS

- Scalability: Cloud infrastructure allows businesses to scale their AI operations. Whether handling a surge in computational demands or expanding AI applications, the scalability of cloud platforms ensures that organizations can adapt to evolving requirements without significant disruptions.

- Cost Efficiency: AIaaS typically offers a pay-as-you-go model similar to cloud providers. This means organizations only pay for the resources they use, avoiding unnecessary upfront spending. This cost-efficient approach makes AI capabilities accessible to businesses of all sizes.

- Accessibility and Collaboration: Cloud-based AI services facilitate accessibility from anywhere with an internet connection. This not only enhances collaboration among geographically dispersed teams but also allows for the integration of AI into various business processes, promoting efficiency and innovation.

- Resource Optimization: Cloud platforms provide advanced tools for resource management and optimization. Organizations can allocate resources based on demand, ensuring optimal performance and cost-effectiveness. This flexibility is crucial for adapting to the dynamic nature of AI workloads.
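The pay-as-you-go idea above comes down to simple arithmetic. The sketch below compares a usage-based plan with a fixed-cost, self-hosted setup. Every price and volume in it is invented for illustration and does not reflect any real provider's pricing.

```python
from dataclasses import dataclass


@dataclass
class UsagePlan:
    """Illustrative pricing inputs; every number used below is made up."""
    price_per_1k_requests: float  # usage-based fee, in dollars
    monthly_fixed_cost: float     # e.g. amortized hardware and staffing


def monthly_cost(plan: UsagePlan, requests: int) -> float:
    """Total monthly cost: fixed fee plus usage-based charges."""
    return plan.monthly_fixed_cost + plan.price_per_1k_requests * requests / 1000


pay_as_you_go = UsagePlan(price_per_1k_requests=2.00, monthly_fixed_cost=0.0)
self_hosted = UsagePlan(price_per_1k_requests=0.10, monthly_fixed_cost=5000.0)

for requests in (100_000, 1_000_000, 5_000_000):
    print(
        f"{requests:>9,} requests: "
        f"pay-as-you-go ${monthly_cost(pay_as_you_go, requests):,.2f} vs "
        f"self-hosted ${monthly_cost(self_hosted, requests):,.2f}"
    )
```

With these assumed numbers, usage-based pricing is far cheaper at low volume, and the fixed-cost option only pulls ahead at high, sustained volume. That is why pay-as-you-go tends to suit teams that are still exploring what AI can do for them.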

Machine Learning Algorithms

The role of machine learning models in AIaaS

In the world of AI as a Service (AIaaS), machine learning (ML) stands as the main force behind intelligent solutions. Machine learning models empower AIaaS by allowing systems to learn patterns from data, make predictions, and continuously improve without being explicitly programmed for each task. The essence of machine learning in AIaaS lies in its ability to handle vast datasets and derive meaningful insights, using them to inform intelligent decision-making and automation.

AIaaS leverages machine learning models to interpret data, recognize patterns, and generate predictions or recommendations. This capability is essential across various industries, ranging from healthcare and finance to retail and manufacturing. By incorporating machine learning into AIaaS, organizations can use data-driven intelligence to enhance their operations, deliver personalized experiences, and optimize decision-making processes.

[Image: diagram illustrating the role of machine learning within AI as a Service (AIaaS)]

Types of machine learning algorithms commonly used

- Supervised Learning: In this approach, the algorithm is trained on a labeled dataset, where the input data and corresponding output are provided. The model learns to associate the correct inputs with the correct outputs, making it suitable for tasks such as classification and regression.

- Unsupervised Learning: Unsupervised learning involves working with unlabeled data, where the algorithm identifies patterns or structures without predefined outputs. Clustering and dimensionality reduction are common applications, helping to discover hidden relationships within the data.

- Reinforcement Learning: This type of learning is based on an agent interacting with an environment and learning from the consequences of its actions. The algorithm receives feedback in the form of rewards or penalties, allowing it to optimize its decision-making over time. Reinforcement learning is particularly valuable in dynamic and interactive scenarios.

- Natural Language Processing (NLP): NLP algorithms enable machines to understand, interpret, and generate human language. Sentiment analysis, language translation, and chatbots are examples of applications where NLP plays a crucial role in AIaaS.

- Computer Vision: Computer vision algorithms allow machines to interpret and analyze visual information from the world, making sense of images or videos. Object detection, facial recognition, and image classification are common applications of computer vision in AIaaS.
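As a rough illustration of the supervised learning idea from the list above, the sketch below classifies new data points by comparing them with labeled examples, using a simple k-nearest-neighbors vote. The features, values, and labels are invented, and k-nearest neighbors is just one convenient classifier chosen for brevity.

```python
import math
from collections import Counter

# Toy labeled dataset: (hours_active_per_week, support_tickets) -> label.
# The features, values, and labels are invented for illustration.
TRAINING_DATA = [
    ((1.0, 5.0), "churn"),
    ((2.0, 4.0), "churn"),
    ((1.5, 6.0), "churn"),
    ((8.0, 1.0), "stay"),
    ((9.0, 0.0), "stay"),
    ((7.5, 2.0), "stay"),
]


def predict(point: tuple[float, float], k: int = 3) -> str:
    """Classify a point by majority vote among its k nearest labeled examples."""
    by_distance = sorted(TRAINING_DATA, key=lambda item: math.dist(item[0], point))
    votes = Counter(label for _, label in by_distance[:k])
    return votes.most_common(1)[0][0]


print(predict((8.5, 1.0)))  # stay
print(predict((1.2, 5.5)))  # churn
```

The labeled examples play the role of the training set: the model never receives an explicit rule for what "churn" means, it only generalizes from inputs paired with known outputs.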

Seamgen Pro Tip: We specialize in seamlessly integrating AI technologies into business operations, similar to how top industry players use AIaaS to enhance efficiency and customer engagement. Our expertise helps businesses harness the full potential of AI, transforming challenges into opportunities for innovation and growth.

Data Processing and Storage

Importance of data in AI models

In the context of AI as a Service (AIaaS), data is the foundation on which intelligent models and machine learning services are built. It is the raw material that fuels machine learning models, enabling them to learn patterns, make informed decisions, and generate meaningful insights.

- Training Data Quality: The quality of training data directly determines the performance of AI models. High-quality, diverse datasets contribute to the robustness and accuracy of machine learning models, ensuring they can generalize well to new, unseen data.

- Bias Mitigation: Data quality is crucial for addressing biases in AI models. Biased or incomplete datasets can lead to biased outcomes. AIaaS providers prioritize data quality and employ techniques to identify and reduce biases, promoting fairness and equity in AI applications.

- Continuous Learning: As AI models evolve, the continuous stream of relevant data becomes paramount. Up-to-date data enables models to adapt to changing conditions, ensuring they remain effective and relevant over time.

How data processing and storage are managed in AIaaS

- Data Preprocessing: Before feeding data into AI models, preprocessing is essential. This involves cleaning and organizing the data, handling missing values, and normalizing features. AIaaS providers use robust preprocessing pipelines to ensure that input data is in a format that fosters effective machine learning.

- Scalable Storage Solutions: Given the amount of data involved in AI applications, scalable storage solutions are crucial. Cloud-based storage allows AIaaS providers to handle large datasets efficiently, facilitating seamless access and retrieval of information as needed.

- Distributed Computing: To process massive datasets efficiently, AIaaS leverages distributed computing frameworks. By distributing computational tasks across multiple nodes, providers can achieve parallel processing, reducing the time required for data processing and model training.

- Data Security Measures: With the increasing focus on data privacy and security, AIaaS providers implement robust measures to safeguard sensitive information. Encryption, access controls, and compliance with data protection regulations are integral components of data management in AIaaS.
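The preprocessing step described above can be sketched in a few lines. This example fills missing values with the column mean and then rescales the column to the range 0 to 1. Mean imputation and min-max scaling are assumed here as two common choices; a provider's actual pipeline may use different techniques, and the sample ages are made up.

```python
from statistics import mean
from typing import Optional


def impute_missing(values: list[Optional[float]]) -> list[float]:
    """Replace missing entries (None) with the mean of the observed values."""
    observed = [v for v in values if v is not None]
    if not observed:
        raise ValueError("cannot impute a column with no observed values")
    fill = mean(observed)
    return [fill if v is None else v for v in values]


def min_max_normalize(values: list[float]) -> list[float]:
    """Rescale values linearly into the range [0, 1]."""
    low, high = min(values), max(values)
    if high == low:
        return [0.0 for _ in values]  # constant column: nothing to rescale
    return [(v - low) / (high - low) for v in values]


raw_ages = [20.0, None, 40.0, 60.0]  # invented sample with one missing value
clean_ages = min_max_normalize(impute_missing(raw_ages))
print(clean_ages)  # [0.0, 0.5, 0.5, 1.0]
```

Putting features on a common scale keeps one large-valued column from dominating distance-based or gradient-based models, which is one reason normalization usually comes before training.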

Marc Alringer

Chief Revenue Officer/Founder, Seamgen

Marc Alringer, the visionary President and Founder of Seamgen, has been at the forefront of digital transformations, specializing in web and mobile app design and development. A proud alumnus of the University of Southern California (USC) with a background in Biomedical and Electrical Engineering, Marc has been instrumental in establishing Seamgen as San Diego's top custom application development company. With a rich history of partnering with Fortune 500 companies, startups, and fast-growth midsize firms, Marc's leadership has seen Seamgen receive accolades such as the Inc 5000 and San Diego Business Journal’s “Fastest Growing Private Companies”. His expertise spans a wide range of technologies, from cloud architecture with partners like Microsoft and Amazon AWS, to mobile app development across platforms like iOS and Android. Marc's dedication to excellence is evident in Seamgen's impressive clientele, which includes giants like Kia, Viasat, Coca Cola, and Oracle.

Applications of AIaaS

Industry-specific Use Cases

Healthcare

In healthcare, AIaaS is making significant strides in enhancing patient care and operational efficiency. Beyond assisting medical professionals in analyzing medical data for quicker decision-making, AI is pivotal in emerging technologies like Neuralink. Founded by Elon Musk, Neuralink is at the forefront of developing high-bandwidth brain-machine interfaces. Although still in its early stages, this technology shows immense promise for treating neurological disorders and integrating artificial intelligence directly with human cognition. As it matures, expect Neuralink to become a major player in the tech industry, potentially revolutionizing how medical practitioners diagnose and manage neurological conditions.

Transportation and Automotive

In transportation, AIaaS is contributing to the development of autonomous vehicle technology, including drones and self-driving cars such as those built by Tesla. It’s also optimizing route planning for delivery services, which is essential for companies like Amazon and UPS. These AI systems analyze traffic data in real time to identify the quickest, most fuel-efficient routes.

Entertainment and Media

In entertainment, AIaaS helps curate content to enhance the viewer experience. Algorithms can suggest movies and shows based on viewing history, and tools like Sora and Midjourney can even generate video and art, which is stirring both excitement and debate in the creative world. AI-driven analytics are also optimizing advertising by predicting viewer preferences and behaviors.

Finance

Voice assistant AI has been around for quite some time now. When you call your bank, you're routed to an automated directory that allows you to make selections while “chatting” with a robot. These chatbots provide 24-hour “live service,” and allow workers to spend more time on non-routine issues.

Retail

AI has also transformed the way retail cameras are used in stores. Previously used to provide evidence for issues such as workplace abuse and theft, AI has enabled cameras in retail to keep track of customer demographics and customer habits.

Manufacturing

AI as a Service (AIaaS) is reshaping the manufacturing industry, introducing innovative solutions that optimize processes and improve operational efficiency. One application is predictive maintenance, where AI leverages real-time data from equipment sensors to predict potential machinery failures.

This approach minimizes downtime, cuts maintenance costs, and ensures uninterrupted production, aligning manufacturing with the efficiency-driven principles observed in healthcare's predictive diagnostics.
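A very simplified version of this idea is to flag sensor readings that stray far from a machine's normal behavior. The sketch below uses a z-score threshold against a baseline of healthy readings. This is basic anomaly flagging rather than a full failure-prediction model, and the vibration values and the threshold are invented for illustration.

```python
from statistics import mean, stdev


def find_anomalies(
    baseline: list[float], readings: list[float], threshold: float = 3.0
) -> list[tuple[int, float]]:
    """Return (index, value) pairs lying more than `threshold` standard
    deviations away from the mean of the healthy baseline."""
    center = mean(baseline)
    spread = stdev(baseline)
    if spread == 0:
        raise ValueError("baseline has no variation to compare against")
    return [
        (i, value)
        for i, value in enumerate(readings)
        if abs(value - center) / spread > threshold
    ]


# Invented vibration amplitudes from a machine known to be healthy.
baseline_vibration = [0.50, 0.52, 0.49, 0.51, 0.48, 0.50, 0.53, 0.47]
# New readings; the third one is deliberately far out of range.
new_readings = [0.51, 0.49, 0.95, 0.50]

print(find_anomalies(baseline_vibration, new_readings))  # [(2, 0.95)]
```

In practice a hosted service would learn from much richer signals, but the workflow is similar: establish what normal looks like, then alert the maintenance team when readings drift away from it.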

Common AIaaS Applications

Natural Language Processing (NLP)

AI as a Service (AIaaS) has become the buzzword, especially with NLP's evolution, all thanks to advanced Large Language Models (LLMs) like those from OpenAI. These technologies have taken conversations with machines to a new level, enabling them to engage in dialogue almost indistinguishable from human interaction. Think of customer support bots that not only solve problems but do so with a sense of understanding, or analytical tools that dive into the depths of data to detect emotional undercurrents in text.

NLP is revolutionizing the way businesses communicate, providing a personalized touch to digital interactions that customers value. It’s transforming the once mechanical exchanges into warm, engaging conversations, paving the way for a new era of customer relationship management.

WHO ARE WE? EXPERTS IN ARTIFICIAL INTELLIGENCE SOLUTIONS

- At Seamgen, we boast over a decade of experience aligning client visions with the most effective AI technologies for their unique challenges.

- We partner with clients ranging from start-ups to Fortune 500 companies, creating cutting-edge AI solutions that drive success.

- We are a U.S.-based, design-led development agency in San Diego, CA.

- We invite you to call us for a free project consultation.

Computer Vision

Enter the realm of AI where machines gain sight, interpreting the visual world as humans do, through the lens of Computer Vision. This facet of AIaaS has made its way into the automotive industry, with companies like Tesla leveraging it to pioneer self-driving cars. By processing visual data in real-time, these vehicles can navigate roads, recognize obstacles, and make split-second driving decisions, all without human intervention.

Beyond the road, computer vision is integral to manufacturing and retail. It fine-tunes quality control with precision and reshapes shopping experiences by understanding customer behaviors and preferences. In healthcare, it assists in diagnosing diseases from images with astonishing accuracy. Computer vision in AIaaS isn’t just an advancement; it’s a gateway to a future where technology sees and understands the world, helping us make smarter, safer, and more personalized choices.

Predictive Analytics

Imagine being able to predict the future. That's almost what predictive analytics in AIaaS offers, thanks to the brainy power of LLMs. These deep-thinking models chew through data, spot trends, and forecast what's next. They're a crystal ball for everything from financial markets to patient health outcomes, providing a clearer picture of the future by reading between the lines of vast amounts of text.

This is where the excitement lies today — in the ability to forecast with a level of precision that was once the stuff of science fiction. For businesses, it's about gaining a head start, whether that's knowing market shifts before they happen or personalizing healthcare like never before. With LLMs, predictive analytics is not just reacting to the future; it's shaping it.
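At its simplest, forecasting means fitting a trend to past data and extending it forward. The sketch below does this with an ordinary least-squares line, a much simpler technique than the LLM-based models discussed above, chosen only because it fits in a few lines. The monthly sales figures are invented.

```python
from statistics import mean


def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least-squares fit of y = slope * x + intercept."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("need at least two distinct x values")
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sxx
    return slope, y_bar - slope * x_bar


# Invented monthly sales figures, used only to illustrate the idea.
months = [1, 2, 3, 4, 5, 6]
units_sold = [102, 108, 121, 129, 142, 149]

slope, intercept = fit_line(months, units_sold)
forecast_month_7 = slope * 7 + intercept
print(f"Forecast for month 7: {forecast_month_7:.1f} units")
```

Hosted predictive analytics services handle far more variables and much messier data, but the principle is the same: learn a pattern from history and project it forward.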

Advantages of AIaaS

Cost Efficiency

One of the main advantages of AI as a Service (AIaaS) is its cost efficiency. By adopting a pay-as-you-go model, organizations can optimize their budget, paying only for the AI resources they use. This cost-effective approach makes advanced AI capabilities accessible to businesses of all sizes.

Scalability

Scalability is a key strength of AIaaS, allowing organizations to scale their AI operations seamlessly. Whether experiencing a sudden surge in demand or expanding AI applications, the scalability of cloud-based AI services ensures that computational resources can be adjusted to meet changing needs. This flexibility is instrumental for businesses aiming to adapt quickly in dynamic environments.

Accessibility

AIaaS enhances accessibility by providing on-demand access to advanced AI capabilities. Organizations can leverage these services without the need for extensive in-house infrastructure or specialized expertise. This improved AI accessibility empowers businesses to harness the potential of artificial intelligence, regardless of their size or industry.

Faster Implementation and Deployment

The swift implementation and deployment of AI solutions are facilitated by AIaaS. Leveraging cloud-based infrastructure, organizations can speed up their integration of AI capabilities into existing systems. This rapid deployment not only accelerates time-to-market for AI applications but also allows businesses to stay agile and responsive to emerging opportunities and challenges in their industries.

Challenges and Considerations

Data Security and Privacy

Data security and privacy are critical challenges in the realm of AIaaS. As organizations use cloud-based AI services, ensuring the protection of sensitive data becomes imperative. Robust encryption, access controls, and compliance with data protection regulations are essential to reduce risks and build trust with users.

Ethical Concerns

Ethical considerations in AIaaS involve the responsible use of artificial intelligence. Issues such as bias in algorithms, fairness, and transparency must be addressed. AI developers and organizations utilizing AIaaS need to prioritize ethical guidelines, ensuring that AI applications do not perpetuate discrimination or harm societal values.

Integration with Existing Systems

Integrating AIaaS with existing systems poses a challenge due to compatibility issues. Legacy systems may not play well with cloud-based AI services, requiring careful planning and potential system upgrades. Ensuring a smooth integration process is crucial to maximize the benefits of AIaaS without disrupting existing operations.

Key AIaaS Providers

Overview of major AIaaS providers

Several major players dominate the AI as a Service (AIaaS) landscape, each offering unique strengths and capabilities. Amazon Web Services (AWS), a pioneer in cloud services, provides a comprehensive suite of AI tools, including Amazon SageMaker for various machine learning tasks.

Microsoft Azure boasts a robust AI platform with services like Azure Machine Learning and cognitive services.

Google Cloud Platform (GCP) stands out with its TensorFlow machine learning framework and diverse AI offerings, while IBM Watson provides AI solutions for enterprises, emphasizing natural language understanding and machine learning capabilities.

Comparison of services offered by different providers

When comparing AIaaS providers, organizations should consider factors such as the range of services, ease of integration, and pricing models. AWS, for example, excels in providing a wide range of AI and machine learning services, suitable for various applications.

Microsoft Azure's seamless integration with Microsoft products and services is advantageous, while Google Cloud Platform's focus on machine learning services and TensorFlow appeals to developers.

IBM Watson emphasizes industry-specific solutions and NLP capabilities. Evaluating these aspects allows businesses to choose the AIaaS cloud provider aligned with their specific needs and goals.

Future Trends in AIaaS

Evolution of AIaaS technologies

As we delve into the evolution of AI as a Service (AIaaS) technologies, notable advancements are shaping the landscape. From improved NLP capabilities to enhanced computer vision algorithms, AIaaS is becoming better at understanding human language and imagery, learning, and reasoning.

The evolution also encompasses the refinement of predictive analytics models, allowing for more accurate forecasts and strategic decision-making.

Emerging applications and industries

IBM's Watson, renowned for its win on Jeopardy!, has evolved into a suite of AI service offerings, showcasing the expanding applications of AIaaS. Watson Virtual Agent, Explorer, Analytics, and Knowledge Studio demonstrate the versatility of AI in addressing diverse industry needs.

Specifically, in the Internet of Things (IoT) realm, Watson's machine learning capabilities enable seamless connection and data processing from various interconnected devices, fostering a new era of intelligent IoT applications.

Providing a vision of the future, CrowdAI co-founder Nic Borensztein stated:

"The world is moving towards automation, robotics, and on-demand services. The biggest barrier to getting there is creating the bridges that allow computers to interact with the world. There is an enormous expansion of the economy that's going to come next."

- Nic Borensztein, CrowdAI

Anticipated advancements in AIaaS

Looking ahead, the anticipated advancements in AIaaS are poised to redefine industries. As technology continues to mature, we can expect even more sophisticated AI models, more machine learning expertise, improved automation, and increased collaboration between AI and human professionals.

Ethical considerations and responsible AI practices are likely to play a more significant role, shaping the trajectory of AIaaS towards a future that prioritizes fairness, transparency, and positive societal impact.

Wrapping Up

In the evolving landscape of AI as a Service (AIaaS), the synergy between artificial intelligence and cloud computing is revolutionizing how industries leverage advanced technologies. The integration of Large Language Models (LLMs) into AIaaS platforms has been particularly transformative, enabling a leap forward in machine learning models' capabilities, from enhancing NLP to refining predictive analytics.

AIaaS democratizes access to these cutting-edge technologies, breaking down traditional barriers associated with infrastructure constraints. Organizations of all sizes benefit from the cost efficiency, scalability, and rapid deployment that AIaaS offers, while also navigating the challenges of data security, ethical considerations, and seamless system integration.

The role of major AIaaS providers, including AWS, Azure, GCP, and IBM Watson, has been instrumental in this transformation, offering diverse solutions that cater to a wide range of business needs. As we look to the future, the continued advancement of LLMs within AIaaS promises to further enhance our ability to generate human-like text, understand complex data patterns, and make more accurate predictions, leading to smarter, more responsive applications.

The journey towards automation, robotics, and on-demand services, enriched by the capabilities of LLMs, holds the promise of untold possibilities and economic expansion. This transformative era, where innovation meets responsibility, underscores the importance of ethical AI practices and collaborative partnerships with intelligent systems, defining a future where AI serves as a cornerstone of growth and societal advancement.
